When manipulating an unfamiliar object, we use the sense of touch to feel our way around it. We feel even what our eyes cannot reach, like the back of the object in our hands, and this is sufficient for us to recover the object’s shape. By touching, we discover just as much as with our eyes. Furthermore, humans are naturally smart explorers. We may do it subconsciously, but we plan our every movement, especially when gathering new information.

Imagine that you need to pick up an object from the fridge. When vision cannot support your movement, you must use touch to securely grasp your target. The way we make the first contacts and how we place or adjust our fingers on the object’s surface are all part of a planning process. However, even the most sophisticated robots cannot perform manipulation as humans do, given that robot touch is far more primitive than ours.

By combining the efforts of world-leading experts in AI and robotics from the University of Birmingham (UK) and the University of Pisa (IT), our team has successfully built a robotic system capable of exploring unfamiliar objects by touch. We are the first to propose a method that combines probabilistic AI techniques to track and refine a model of the object’s surface while simultaneously planning the best exploration action for gathering new information.

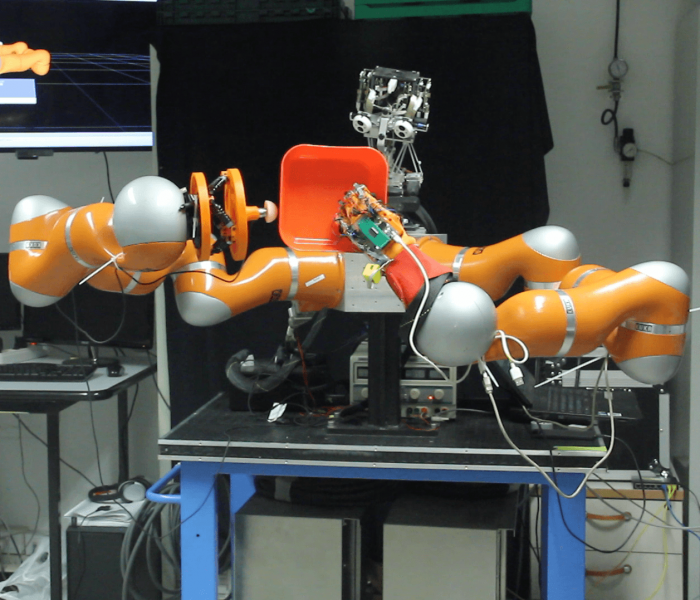

Our approach enables a humanoid robot to explore an object held in one hand and to efficiently recover its complete shape with the other. The details of this approach have been released in the paper “GPAtlasRRT: A local tactile exploration planner for recovering the shape of novel objects,” published in the International Journal of Humanoid Robotics, and it recently won the College of Engineering and Physical Sciences’ “Paper of the Month” award.

Our approach works as follows. Using an RGB-D camera mounted on the robot’s head, we capture a single view of the object. This is the only time the robot accesses information through vision. The acquired image consists of a 2D color image (RGB) and an associated depth map, which tells the robot how far away each visible point is and can be turned into a point cloud. By using proprioception, the awareness of its own body, the robot isolates the part of the image that belongs to the object.
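To make this step concrete, here is a minimal sketch of how a depth map can become a point cloud and how knowledge of the hand’s position can isolate the object. It is an illustration under stated assumptions, not the team’s implementation: the pinhole camera parameters (`fx`, `fy`, `cx`, `cy`), the toy depth values, the hand position and the cropping radius are all hypothetical.

```python
import numpy as np

def depth_to_points(depth, fx, fy, cx, cy):
    """Back-project a depth map (in metres) into an (N, 3) point cloud in the camera frame."""
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))  # pixel column and row indices
    x = (u - cx) * depth / fx                       # pinhole camera model
    y = (v - cy) * depth / fy
    points = np.stack([x, y, depth], axis=-1).reshape(-1, 3)
    return points[points[:, 2] > 0]                 # drop pixels without a depth reading

def crop_near_hand(points, hand_position, radius):
    """Keep only points within `radius` of the hand, whose position the robot knows from its joints."""
    distance = np.linalg.norm(points - hand_position, axis=1)
    return points[distance < radius]

# Toy scene (assumption): a flat background 2 m away and a small object patch at 0.5 m.
depth = np.full((48, 64), 2.0)
depth[20:28, 28:36] = 0.5

cloud = depth_to_points(depth, fx=60.0, fy=60.0, cx=32.0, cy=24.0)
hand = np.array([0.0, 0.0, 0.5])  # hypothetical hand position in the camera frame
object_points = crop_near_hand(cloud, hand, radius=0.15)
print(cloud.shape, object_points.shape)
```

In this toy scene only the 8 by 8 pixel patch near the hand survives the crop; the background is discarded because it lies far from where the robot knows its hand to be.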

To support the subsequent planning of the tactile exploration, the robot needs a way to build, in its own mind, a “rough” model of the object’s whole surface, starting from the acquired visual clues. For this we use a well-known mathematical framework called a Gaussian Process (GP). In probability theory, a GP is a stochastic process: a collection of random variables, in our case associated with points on the object’s surface, such that any finite set of them follows a joint Gaussian distribution. The Gaussian is the familiar bell-shaped, symmetric distribution. Since many natural phenomena are symmetric, GPs tend to reinforce symmetry in their predictions. Nonetheless, a GP does not assume that symmetry always holds: along with the predicted surface, it also provides a measure of the uncertainty in that prediction. As a rule of thumb, the closer a predicted part of the surface is to a part already seen by the robot, the lower its associated uncertainty.
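The rule of thumb about uncertainty can be checked with a small GP regression sketch. It is a one-dimensional toy, assuming a squared-exponential kernel with hand-picked parameters (`length_scale`, `variance`, `noise`) and a made-up surface profile; it is not necessarily the kernel or the surface representation used in the paper.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.5, variance=1.0):
    """Squared-exponential covariance between two sets of 1D inputs."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / length_scale**2)

def gp_predict(x_train, y_train, x_test, noise=1e-4):
    """Posterior mean and variance of a zero-mean GP at the test inputs."""
    K = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    K_s = rbf_kernel(x_train, x_test)
    L = np.linalg.cholesky(K)                        # K = L @ L.T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.diag(rbf_kernel(x_test, x_test)) - np.sum(v**2, axis=0)
    return mean, np.maximum(var, 0.0)                # clip tiny negative round-off

# Toy "surface height" seen only on the left part of the domain (assumption).
x_seen = np.array([0.0, 0.2, 0.4, 0.6])
y_seen = np.sin(2.0 * x_seen)

# One query close to the seen data, one far away from it.
x_query = np.array([0.3, 2.5])
mean, var = gp_predict(x_seen, y_seen, x_query)
print("predicted heights:", mean)
print("predictive variances:", var)
```

The query at 0.3 sits between observed points and receives a small variance, while the query at 2.5 is far from anything observed and its variance stays close to the prior value of 1.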

Figure 1: Vito robot from the University of Pisa executing a step of tactile exploration. Image courtesy Claudio Zito.

The tactile exploration builds on top of the GP’s model of the surface. We use a path planning approach, called AtlasRRT, that is well suited to exploring complex surfaces. An atlas is a collection of charts, each of which maps a small area of a larger and more complex surface. As in an atlas of the world, each local chart is presented as a flat map. When we need to explore, we make a plan based on these flat maps constructed over the object’s surface, and we move across the object to discover new areas. By moving toward regions of high interest, i.e., those that our model associates with high uncertainty, we maximize the information gained by the exploration and minimize the number of paths needed to recover the real surface.
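The idea of planning on a flat chart and then returning to the surface can be sketched as follows. This is a simplified illustration, not the AtlasRRT planner itself: the object is assumed to be a unit sphere, the candidate moves are sampled on a circle in the tangent plane, and `uncertainty` is a hypothetical stand-in for the GP’s predictive variance that simply grows with distance from previously seen points.

```python
import numpy as np

def tangent_basis(normal):
    """Two orthonormal vectors spanning the plane perpendicular to `normal`."""
    n = normal / np.linalg.norm(normal)
    helper = np.array([1.0, 0.0, 0.0]) if abs(n[0]) < 0.9 else np.array([0.0, 1.0, 0.0])
    t1 = np.cross(n, helper)
    t1 /= np.linalg.norm(t1)
    t2 = np.cross(n, t1)
    return t1, t2

def chart_candidates(center, normal, radius, n_samples=16):
    """Sample candidate targets on a circle in the flat chart centred at `center`."""
    t1, t2 = tangent_basis(normal)
    angles = np.linspace(0.0, 2.0 * np.pi, n_samples, endpoint=False)
    return center + radius * (np.cos(angles)[:, None] * t1 + np.sin(angles)[:, None] * t2)

def project_to_sphere(points):
    """Map points from the flat chart back onto the (assumed) unit-sphere surface."""
    return points / np.linalg.norm(points, axis=1, keepdims=True)

def uncertainty(points, seen, length_scale=0.3):
    """Hypothetical stand-in for GP variance: high far from seen points, low near them."""
    d = np.linalg.norm(points[:, None, :] - seen[None, :, :], axis=2)
    return 1.0 - np.exp(-0.5 * d**2 / length_scale**2).mean(axis=1)

center = np.array([0.0, 0.0, 1.0])             # current contact point; the normal equals it on a unit sphere
seen = project_to_sphere(np.array([[0.0, 0.0, 1.0], [0.3, 0.0, 1.0]]))

flat = chart_candidates(center, normal=center, radius=0.2)
on_surface = project_to_sphere(flat)
best = on_surface[np.argmax(uncertainty(on_surface, seen))]
print("next target on the surface:", best)
```

Because the previously seen points lie toward positive x, the selected target lies in the opposite direction, where the stand-in uncertainty is highest.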

Although each chart is flat and can be seen as tangent to the surface, our robot moves along the object’s actual surface so that its finger never loses contact, collecting new points from unseen regions along the path it follows. These new points are added to the “rough” model, and the GP is refined with the new information to plan the next best exploration path.
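The explore-then-refine cycle can be illustrated with a short loop. This is a one-dimensional toy under stated assumptions (a squared-exponential kernel, fixed parameters, a grid of candidate locations, and a single touched point per step rather than a path); the real system plans paths over a 3D surface and also records the measured contact positions.

```python
import numpy as np

def kernel(a, b, length_scale=0.5):
    """Squared-exponential covariance between two sets of 1D inputs."""
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length_scale**2)

def predictive_variance(x_train, x_query, noise=1e-4):
    """GP predictive variance; with fixed parameters it depends only on where data was collected."""
    K = kernel(x_train, x_train) + noise * np.eye(len(x_train))
    K_s = kernel(x_train, x_query)
    var = 1.0 - np.sum(K_s * np.linalg.solve(K, K_s), axis=0)
    return np.maximum(var, 0.0)

x_touched = np.array([0.0, 0.2, 0.4])   # locations known from the initial view (assumption)
grid = np.linspace(0.0, 3.0, 61)        # candidate locations along the object
history = []

for step in range(5):
    var = predictive_variance(x_touched, grid)
    history.append(var.max())                  # worst-case uncertainty before this touch
    x_next = grid[np.argmax(var)]              # go where the model is least certain
    x_touched = np.append(x_touched, x_next)   # "touch" there and refine the model

print("maximum variance before each touch:", np.round(history, 3))
```

Each new contact can only reduce the model’s uncertainty, so the worst-case variance never increases from one step to the next, and targeting the most uncertain location is what makes it shrink quickly.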

The system produces a better shape recovery in a shorter period of time, and it is a new step towards next-generation robots that are more intelligent and more dexterous.

These findings are described in the article entitled GPAtlasRRT: A Local Tactile Exploration Planner for Recovering the Shape of Novel Objects, recently published in the International Journal of Humanoid Robotics.